As we move deeper into January 2026, the tech landscape has shifted from the “wild west” of early-stage experimentation into a refined, high-stakes industrial revolution. According to recent data from Gartner, global spending on IT and emerging technologies is projected to hit a staggering $6.2 trillion by the end of 2026, a 10% increase from the already record-breaking 2025.

We are no longer simply “excited” about the future; we are currently architecting the foundations of a new digital society. For tech leaders, the question has changed from “How does this tool work?” to “How do I secure and scale this within the next 12 months?” From the rise of autonomous agents that manage our workloads to the biological breakthroughs powering our servers, the pace of innovation has surpassed even the most aggressive 2025 tech predictions.

If you are navigating the current fiscal year, staying ahead requires more than a casual eye on headlines. You need a deep dive into the specific Future Tech Trends that are graduating from “theoretical labs” to “market dominance.”

1. The Pivot from LLMs to LMMs: Agentic Autonomy is the New Baseline

In late 2024 and 2025, we were impressed by the Large Language Model (LLM) that could write a clever poem or summarize a PDF. In the next 12 months, however, we are witnessing the complete dominance of Large Multimodal Models (LMMs) powered by Agentic Architecture.

The question IT managers are asking has moved from “How do I prompt AI?” to “How do I govern an autonomous AI workforce?”

We have entered the “Post-Prompt” era. Instead of human workers micro-managing AI conversations, we are seeing the rise of AI Agents. These are systems with “agency”—the ability to browse the web, access company APIs, manage cloud costs, and collaborate with other AI agents without constant human intervention.

- Workflow Integration: Over the next 12 months, your IDE will likely be managed by a junior-level agent that pre-builds feature branches before you even start your coffee.

- The Zero-UI Trend: AI is moving away from the “chat box” and becoming a background whisperer, managing smart homes, factory lines, and supply chains in real-time based on visual and sensor-based input.
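
Governing an agent that can call company APIs usually starts with deciding which tools it is allowed to touch. The sketch below is a minimal illustration of that idea, not a real agent framework: the `Agent` class, the `TOOLS` registry, and both tool functions are hypothetical names invented for this example, and the cost figures are made up.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet, List


@dataclass
class Agent:
    """Hypothetical agent that may only call tools it has been granted."""
    name: str
    allowed_tools: FrozenSet[str]
    audit_log: List[str] = field(default_factory=list)

    def call(self, tool_name: str, registry: Dict[str, Callable[..., object]], **kwargs):
        # Deny by default: anything outside the allowlist is refused and logged.
        if tool_name not in self.allowed_tools:
            self.audit_log.append(f"DENIED {tool_name}")
            raise PermissionError(f"{self.name} may not call {tool_name}")
        self.audit_log.append(f"ALLOWED {tool_name}")
        return registry[tool_name](**kwargs)


def get_cloud_cost(service: str) -> float:
    # Stand-in for a real billing API call; the numbers are invented.
    return {"compute": 1200.0, "storage": 300.0}.get(service, 0.0)


def delete_branch(name: str) -> str:
    # Stand-in for a destructive action that most agents should not have.
    return f"deleted {name}"


# Hypothetical registry mapping tool names to callables.
TOOLS = {"get_cloud_cost": get_cloud_cost, "delete_branch": delete_branch}
```

An agent created as `Agent("cost-bot", frozenset({"get_cloud_cost"}))` can read costs, but a call to `delete_branch` raises `PermissionError`, and both attempts end up in `audit_log`. The deny-by-default allowlist and the audit trail are the two pieces a human reviewer would rely on.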

2. Bio-Informatics and The “Living Cloud”: Why Biological Computing is the Ultimate Scalability Solution

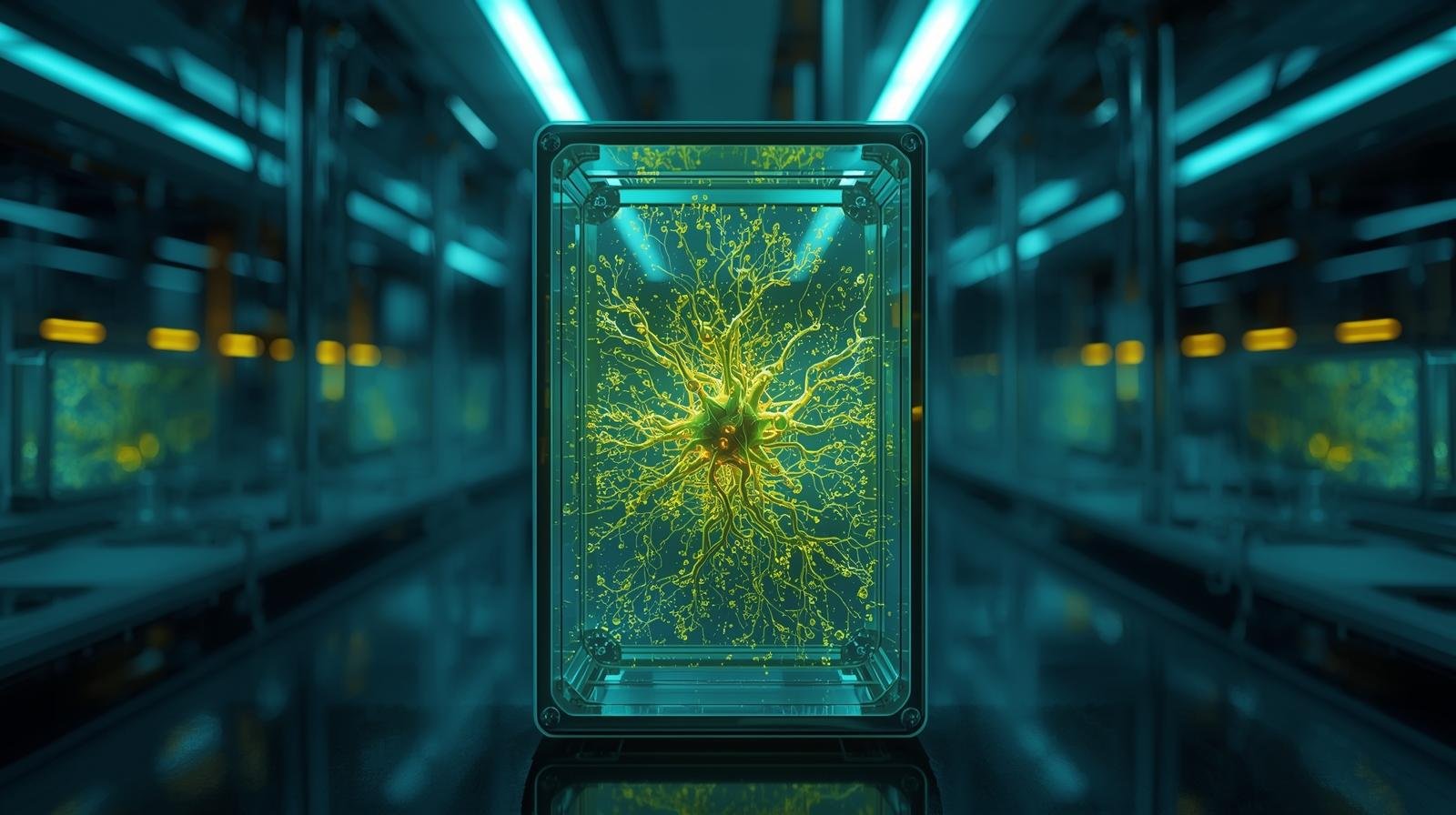

While silicon dominated 2025, 2026 is becoming the year of the Bio-Digital Convergence. One of the most fascinating trends for the remainder of this year is the practical application of DNA-based data storage and bio-informatics.

For the first time, the “Physical Wall” of data center density is becoming a board-level concern. Silicon is reaching its thermal limit. As a result, we are seeing major tech giants piloting “Living Cloud” modules.

- The Efficiency Gap: Traditional servers are struggling with the heat generated by massive AI inference. Biological computing, specifically using synthetic DNA to store archival data, offers a solution that is millions of times denser than SSD technology.

- Computing with Wetware: Beyond storage, the research into “Organoid Intelligence”—using lab-grown clusters of brain cells to perform pattern recognition tasks—is moving toward commercial viability. IT professionals who master the basics of biological programming will be the high-demand “Hybrid Architects” of 2027.
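
To make DNA-based storage concrete, here is a toy sketch of the core idea: each nucleotide can carry two bits, so one byte maps to four bases. The mapping (`A`=00, `C`=01, `G`=10, `T`=11) and the function names are assumptions chosen for illustration. Practical schemes add error correction and avoid sequences that are hard to synthesize or read, none of which is shown here.

```python
BASES = "ACGT"  # assumed mapping: A=00, C=01, G=10, T=11


def encode(data: bytes) -> str:
    """Turn bytes into a nucleotide string, two bits per base."""
    out = []
    for byte in data:
        # Read the byte two bits at a time, most significant pair first.
        for shift in (6, 4, 2, 0):
            out.append(BASES[(byte >> shift) & 0b11])
    return "".join(out)


def decode(strand: str) -> bytes:
    """Inverse of encode: four bases back into one byte."""
    if len(strand) % 4 != 0:
        raise ValueError("strand length must be a multiple of 4")
    out = bytearray()
    for i in range(0, len(strand), 4):
        byte = 0
        for base in strand[i:i + 4]:
            byte = (byte << 2) | BASES.index(base)
        out.append(byte)
    return bytes(out)


print(encode(b"Hi"))  # prints CAGACGGC
```

The round trip `decode(encode(data)) == data` holds for any byte string under this mapping.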

3. Sovereign AI: The Death of Global Centralization and the Birth of National Compute

If 2025 was defined by a few Big Tech companies providing intelligence to the world, the next 12 months will be defined by Sovereign AI. Nations have realized that relying on a foreign provider for “Intelligence-as-a-Service” is strategic suicide.

On data privacy and national security, the shift is stark: countries are now stockpiling GPUs the way they used to stockpile oil.

- Digital Borderlands: Governments in the EU, UAE, and Japan are aggressively funding domestic AI foundations. These “Sovereign Clouds” are trained on local languages, cultures, and legal frameworks, ensuring that sensitive citizen data never crosses an ocean.

- Decoupled Intelligence: For tech businesses, this means you can no longer build a “one-size-fits-all” application. The next 12 months will require software to be “geo-intelligent”—adapting to the different AI regulations and models enforced by national sovereign clouds.
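
One way to picture “geo-intelligent” software is a per-region policy table that the application consults before sending any request to a model. The sketch below is only an illustration under stated assumptions: the `RegionPolicy` fields, the region keys, and the `*.example` endpoints are all hypothetical, and the flag values are placeholders rather than statements about any actual regulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionPolicy:
    model_endpoint: str         # hypothetical sovereign-cloud endpoint
    data_stays_in_region: bool  # placeholder flag, not legal guidance


# Hypothetical policy table; a real one would come from legal and compliance review.
POLICIES = {
    "EU": RegionPolicy("https://eu.sovereign.example/v1", True),
    "AE": RegionPolicy("https://ae.sovereign.example/v1", True),
    "JP": RegionPolicy("https://jp.sovereign.example/v1", True),
}


def resolve_policy(region: str) -> RegionPolicy:
    """Return the policy for a region, failing closed if none is configured."""
    key = region.strip().upper()
    if key not in POLICIES:
        raise LookupError(f"no AI policy configured for region {key!r}")
    return POLICIES[key]
```

Raising on an unknown region, instead of falling back to a default model, is a deliberate choice here: a request from an unconfigured jurisdiction is stopped until someone adds a policy for it.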

4. Post-Quantum Security: Moving Toward “Quantum-Resistance” in Everyday Ops

We have reached a critical point in the “Q-Day” countdown. While a cryptographically relevant quantum computer might still be years away, the strategy of “Harvest Now, Decrypt Later” has made current encryption a liability today.

Throughout 2026, every IT leader must prioritize Post-Quantum Cryptography (PQC). The “Next 12 Months” strategy is about securing the perimeter before the threat becomes active.

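- The Transition: Companies are now moving toward “Hybrid Encryption,” which pairs classical public-key algorithms such as RSA or ECDH with NIST-standardized lattice-based algorithms such as ML-KEM (FIPS 203), so that a session stays protected as long as either scheme holds. Symmetric ciphers such as AES are not replaced; the usual guidance is to use 256-bit keys.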

- Digital Identity Revamped: Because AI can now clone voices and faces convincingly (deepfakes), we are seeing a return to Web3/Blockchain roots. High-authority identity is being moved to decentralized, hardware-secured wallets. Seeing and hearing are no longer believing; only cryptographic verification is truth.
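
The hybrid approach described above often comes down to one step: feed both shared secrets, one from the classical exchange and one from the post-quantum exchange, into a single key derivation function. The sketch below shows that combining step using HKDF with HMAC-SHA256 (RFC 5869) built from Python's standard library. It is an assumption-laden illustration: `derive_hybrid_key` is a hypothetical helper, and the two secrets are random placeholder bytes, since a real system would obtain them from a vetted cryptographic library rather than from `os.urandom`.

```python
import hashlib
import hmac
import os


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) extract-then-expand using HMAC-SHA256."""
    if length > 255 * 32:
        raise ValueError("requested output is too long for HKDF-SHA256")
    if not salt:
        salt = b"\x00" * 32  # RFC 5869 default when no salt is given
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()  # extract
    okm, block, counter = b"", b"", 1
    while len(okm) < length:                            # expand
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def derive_hybrid_key(classical_secret: bytes, pq_secret: bytes, context: bytes) -> bytes:
    """Hypothetical helper: derive one session key from both shared secrets."""
    # Concatenating the secrets means the output depends on both of them.
    return hkdf_sha256(classical_secret + pq_secret, salt=b"", info=context)


# Placeholder secrets; real ones would come from e.g. ECDH and ML-KEM.
classical = os.urandom(32)
post_quantum = os.urandom(32)
session_key = derive_hybrid_key(classical, post_quantum, b"example-session")
```

Because the derived key depends on both inputs, an attacker who recovers only one of the two secrets still cannot reconstruct the session key.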

5. Circular Tech: The Era of Mandated Sustainability in AI Infrastructure

Finally, the industry is reckoning with its energy debt. The massive energy consumption required to run LMMs and autonomous data centers has triggered a “Sustainable Infrastructure” boom.

A common question is “How can AI be greener?” The next 12 months will provide the answer through Circular Tech.

- Thermal Recirculation: Instead of just “cooling” data centers, we are now “harvesting” the heat. Large-scale 2026 data centers are being built as municipal heaters, warming the local residential areas with the excess heat generated by GPU racks.

- Modular Hardware: We are moving away from the “e-waste” model. The trend favors “Evergreen Hardware”: servers where individual chiplets can be swapped for AI-specific upgrades without throwing away the entire chassis. Sustainability is no longer a marketing line; it’s an engineering constraint.

Key Takeaways for the Next 12 Months:

- From Assistant to Agent: Stop hiring “chatbots.” Focus on integrating autonomous AI agents that have permission to execute workflows.

- Prioritize Sovereign Compliance: Ensure your 2026 tech stack is compatible with localized, national AI regulations and domestic clouds.

- Quantum Audit Today: Begin the migration to post-quantum encryption standards before your 2025-stored data becomes readable to hackers.

- Invest in Digital Identity: Traditional passwords and biometrics are failing. Move high-stakes company actions to hardware-locked, cryptographic wallets.

- Architect for Energy: In 2026, the best engineer is the one who designs the system with the lowest energy per inference, not the biggest one.
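
Energy per inference is simple to compute once you have average power draw and throughput: energy in joules is power in watts multiplied by time in seconds, divided by the number of inferences served. The sketch below shows the arithmetic; the function name and both sets of measurements are invented for illustration and do not describe any real hardware.

```python
def joules_per_inference(avg_power_watts: float, duration_s: float, inferences: int) -> float:
    """Energy per inference = average power x time / number of inferences."""
    if inferences <= 0:
        raise ValueError("inferences must be positive")
    return avg_power_watts * duration_s / inferences


# Hypothetical one-minute measurements for two systems.
big_system = joules_per_inference(avg_power_watts=700, duration_s=60, inferences=4200)
small_system = joules_per_inference(avg_power_watts=300, duration_s=60, inferences=2400)

print(big_system)    # prints 10.0
print(small_system)  # prints 7.5
```

In this made-up comparison the larger system serves more requests per minute, but the smaller one spends less energy on each request, which is the metric the takeaway above asks engineers to optimize.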