If you want to understand the gravity of the current technological shift, you only need to look at one number: $3 trillion. In 2024, NVIDIA’s market capitalization crossed that threshold, briefly making it the most valuable company on the planet. This wasn’t just a stock market rally; it was a global admission that we have entered a new era of computing in which GPU power is one of the most precious commodities on earth.

For the uninitiated, NVIDIA used to be the company that made video games look pretty. Today, it is the dominant supplier of the AI Infrastructure behind most Large Language Models (LLMs), autonomous vehicles, and protein-folding simulations. We are no longer in a software boom; we are in a “Compute Supercycle.”

In this deep dive, we explore why NVIDIA has effectively “captured” the market, why sovereign nations are now stockpiling GPUs like nuclear warheads, and what this means for the future of global AI Infrastructure.

1. Beyond Graphics: How CUDA Created the Indestructible Moat

Most people think NVIDIA’s dominance is based on hardware. They see the H100 or the new Blackwell chips and think it’s just about having the fastest transistors. They’re only half right.

The true secret to NVIDIA’s dominance is CUDA (Compute Unified Device Architecture). Launched in 2006, CUDA is the proprietary software layer that allows developers to use GPUs for general-purpose mathematical processing. For nearly two decades, most AI researchers, academics, and software engineers have built their libraries, tools, and frameworks on CUDA.

The result? If a competitor like AMD or Intel releases a faster chip today, it faces a “Software Wall.” To switch to a different chip, a company like OpenAI or Meta would have to port or rewrite large amounts of code that has been written and tuned for CUDA. NVIDIA didn’t just build a better engine; it built the roads that most of the cars already drive on.

2. The Great Scarcity: GPU Power as the New Global GDP

In 2026, the wealth of a nation or a corporation is no longer measured just in oil reserves or gold; it’s measured in FLOPS (floating-point operations per second).

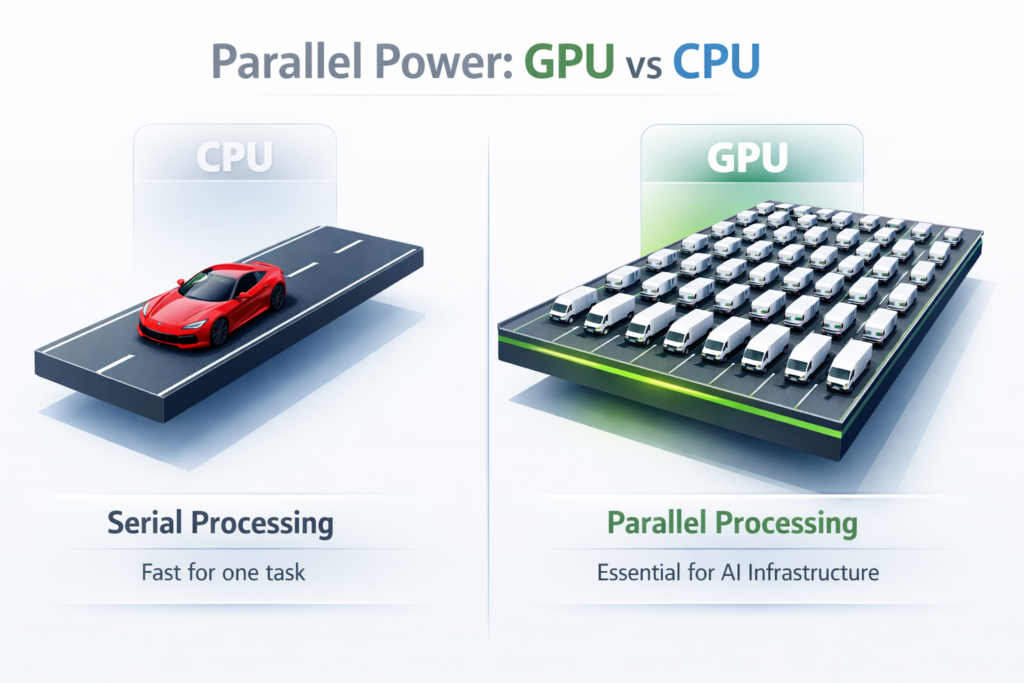

Modern AI Infrastructure is hungry. Training a model like GPT-5 (or its successors) requires tens of thousands of GPUs running in parallel for months. Because NVIDIA’s production capacity is limited by how fast companies like TSMC can manufacture their chips, we have reached a state of “GPU Scarcity.”
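To get a feel for the scale involved, here is a minimal back-of-the-envelope sketch. The cluster size (20,000 GPUs) and duration (90 days) are hypothetical numbers chosen only to match the "tens of thousands of GPUs for months" description; they are not figures for any real model.

```python
def gpu_hours(num_gpus: int, days: float) -> float:
    """Total GPU-hours used by num_gpus running continuously for `days` days."""
    return num_gpus * days * 24


# Hypothetical training run: 20,000 GPUs running non-stop for 90 days.
total = gpu_hours(20_000, 90)
print(f"{total:,.0f} GPU-hours")
```

Even under these assumed numbers, a single run consumes tens of millions of GPU-hours, which is why supply constraints at the manufacturing level ripple through the whole industry.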

Why is NVIDIA so dominant?

People often ask whether NVIDIA is a monopoly. Technically it has competitors, but it holds an estimated 90% market share in the AI data center space. When demand exceeds supply many times over, the company that owns the supply sets the rules. This scarcity has turned GPUs into a form of “currency,” with startups sometimes trading equity for GPU access.

3. Designing the Next Epoch: From Hopper to Blackwell and Beyond

NVIDIA’s CEO, Jensen Huang, famously stated that “Moore’s Law is dead,” suggesting that traditional CPU advancement has slowed. In its place, observers have proposed what is usually called “Huang’s Law” (or “Jensen’s Law”): the idea that AI performance on GPUs compounds far faster than Moore’s Law, with quoted doubling times ranging from every two years to, in the most aggressive tellings, every six months.

The leap from the Hopper (H100) architecture to the Blackwell (B200) architecture is often cited as evidence. Blackwell isn’t just a chip; it’s the basis of an entire system designed to function as one giant GPU.
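The doubling period matters enormously once it compounds. The sketch below is plain exponential-growth arithmetic, not a measurement of any real chip; the four-year horizon and the two doubling periods compared are assumptions chosen for illustration.

```python
def growth_factor(years: float, doubling_period_years: float) -> float:
    """Performance multiple after `years` if performance doubles every
    `doubling_period_years` years (pure compounding, no real-world limits)."""
    return 2 ** (years / doubling_period_years)


# Compare an aggressive six-month doubling with a two-year doubling
# over the same (hypothetical) four-year horizon.
for label, period in [("every 6 months", 0.5), ("every 2 years", 2.0)]:
    print(f"Doubling {label}: {growth_factor(4, period):,.0f}x after 4 years")
```

Over four years, a six-month doubling compounds to 256x while a two-year doubling yields only 4x, which is why claims about the doubling rate deserve scrutiny.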

- Performance: Up to 5x the AI performance of Hopper, by NVIDIA’s own figures.

- Efficiency: Up to 25x lower energy consumption per task, by NVIDIA’s own figures for large-model inference.

- The Bottom Line: For companies building massive AI Infrastructure, Blackwell can mean the difference between a training run that takes a year and one that takes a few months.
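A quick sanity check on speedup figures like these: the sketch below assumes training time scales inversely with raw performance, which is an idealization that real workloads rarely achieve (memory, networking, and software overheads all get in the way). The function names are made up for this illustration.

```python
def training_months(baseline_months: float, speedup: float) -> float:
    """Ideal training time after a given speedup (assumes perfect scaling)."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_months / speedup


def required_speedup(baseline_months: float, target_months: float) -> float:
    """Speedup needed to shrink baseline_months down to target_months."""
    if target_months <= 0:
        raise ValueError("target_months must be positive")
    return baseline_months / target_months


# A 5x speedup applied to a hypothetical 12-month training run.
print(f"{training_months(12, 5):.1f} months with a 5x speedup")
# Speedup needed to go from 12 months to 1 month.
print(f"{required_speedup(12, 1):.0f}x needed for a one-month run")
```

Under ideal scaling, a 5x gain turns a year into roughly two and a half months; compressing a year into a single month would take a 12x gain.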

4. The Sovereign AI Factor: Why Nations are Stockpiling Compute

One of the most significant trends in 2026 is the rise of “Sovereign AI.” Countries like Saudi Arabia, the UAE, Japan, and France have realized that relying on Silicon Valley’s “generic” AI is a national security risk.

These nations are spending billions to build their own domestic AI Infrastructure. They want models trained on their own languages, cultural values, and legal datasets. Because NVIDIA is one of the few providers that can deliver “ready-to-wear” data centers at scale, these nations have become some of NVIDIA’s most reliable customers.

Compute power has become the new Suez Canal. If you control the hardware that hosts a nation’s intelligence, you have geopolitical leverage that was previously unimaginable for a hardware manufacturer.

5. Can the “NVIDIA-Killer” Chip Exist? (AMD, Intel, and ASICs)

The tech giants, Amazon (Trainium), Google (TPU), and Microsoft (Maia), are tired of paying the “NVIDIA Tax.” Each of them is now designing its own custom AI chips (ASICs) to run its own models more cheaply.

Can they win?

- The Pro: Custom chips are highly efficient for specific tasks. If you only want to run a specific Google model, a Google TPU is fantastic.

- The Con: NVIDIA GPUs are general purpose. They are the Swiss Army Knives of the AI world. A startup doesn’t know what model it will be running next year, so it buys NVIDIA for flexibility.

AMD is making significant strides with its ROCm software (its attempt to bridge the CUDA gap), but for the foreseeable future, the “NVIDIA-killer” remains hypothetical. NVIDIA isn’t standing still, either: it keeps shipping new architectures while rivals are still working to match the previous generation.

Key Takeaways

- Software is the Moat: NVIDIA’s dominance isn’t just about silicon; it’s about the CUDA software ecosystem that developers have spent nearly 20 years adopting.

- Compute as a Resource: Access to high-end GPUs is one of the greatest bottlenecks for AI progress today. AI Infrastructure is now a prerequisite for national competitiveness.

- Systems, Not Just Chips: NVIDIA has moved from selling individual graphics cards to selling “Whole-Rack” data center systems (like those built on Blackwell), tying customers to a hardware/software bundle.

- ASICs are Growing: While cloud giants are building their own chips to save money, NVIDIA remains the gold standard for versatility and raw power.

- Sovereign Wealth Influence: Nations are becoming major buyers of compute, treating GPUs as critical infrastructure on par with energy grids or water systems.
